Effectiveness Versus Efficacy: More Than a Debate Over Language

SOURCE: J Orthop Sports Phys Ther 2003 (Apr); 33 (4): 163–165

Julie M. Fritz, PT, PhD, ATC, Joshua Cleland, PT, DPT, OCS

Department of Physical Therapy,

University of Pittsburgh,

Pittsburgh, PA.

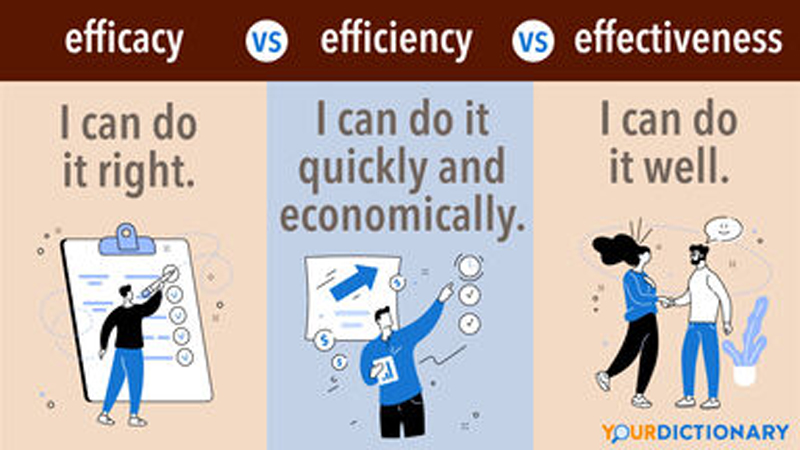

As the physical therapy profession continues the paradigm shift toward evidence-based practice, it becomes increasingly important for therapists to base clinical decisions on the best available evidence. Defining the best available evidence, however, may not be as straightforward as we assume, and will inevitably depend in part upon the perspective and values of the individual making the judgment. To some, the best evidence may be viewed as research that minimizes bias to the greatest extent possible, while others may prioritize research that is deemed most pertinent to clinical practice. The evidence most highly valued and ultimately judged to be the best may differ based on which perspective predominates. One issue that highlights the importance of perspective in judging the evidence is the difference between efficacy and effectiveness approaches to research. These terms are frequently assumed to be synonyms and are often used incorrectly in the literature. There is actually a meaningful distinction between efficacy and effectiveness approaches to research. The distinction is not merely a pedantic concern within the lexicon of researchers, but impacts the nature of the results disseminated by a study, how the results may be applied to clinical practice, and finally how the results are judged by those who seek to evaluate the evidence. [5] Understanding the contrast between effectiveness and efficacy has important and very practical implications for those who seek to evaluate and apply research evidence to clinical practice.

Studies using an efficacy approach are designed to investigate the benefits of an intervention under ideal and highly controlled conditions. While this approach has many methodological advantages, efficacy studies frequently entail substantial deviations from clinical practice in the study design, including the elimination of treatment preferences and multimodal treatment programs, control of the skill levels of the clinicians delivering the intervention, and restrictive control over the study sample. [3, 13] The preferred design for efficacy studies is the randomized controlled trial, frequently employing a no-treatment or placebo group as a comparison in order to isolate the effects of 1 particular intervention. [7] Studies using an efficacy approach have high internal validity and typically score highly on scales designed by researchers to evaluate the quality of clinical trials. However, the generalizability of the results of efficacy studies to the typical practice setting has been questioned. [2] In clinical practice, therapists tend to use many different interventions within a comprehensive treatment program and, therefore, studies investigating the effects of an isolated treatment may appear less useful. In addition, clinical decision making typically entails choices between competing treatment options and, therefore, studies comparing an intervention to an alternative of no intervention (or a placebo intervention) may not seem as directly applicable to the process.

An example of a study using an efficacy approach is a randomized trial by Hides et al. [6] This study examined the effects of exercise on patients with low back pain (LBP). The study used a highly standardized exercise program specifically designed to isolate and strengthen the multifidus muscle. The patient population was restricted to patients with a first episode of unilateral LBP less than 3 weeks in duration. The exercise program was compared to a control group that received no intervention other than medication and advice to remain active. The results of the study favored the group receiving the exercises. The study design permits the conclusion that training the multifidus is beneficial for patients with LBP (versus doing no exercise); however, the generalizability of the results might be questionable.

Relatively few patients seen by physical therapists will be experiencing a first episode of LBP that is less than 3 weeks in duration. Furthermore, therapists may not be as highly trained as the authors of the study in the particular exercise techniques used. Finally, it cannot be determined if the multifidus training program would be better than an alternative exercise program. A physical therapist may choose to utilize this treatment based on the results of the study by Hides et al; [6] however, for all these reasons, the favorable results found in the study may not generalize to the therapist’s own practice.

Studies using an effectiveness or pragmatic approach seek to examine the outcomes of interventions under circumstances that more closely approximate the real world, employing less standardized, more multimodal treatment protocols, more heterogeneous patient samples, and delivery of the interventions in routine clinical settings. [13] Effectiveness studies may also use a randomized trial design; however, the new treatment being studied is typically compared to treatment using the standard of practice for the patient population being studied. [1] Studies using an effectiveness approach tend to sacrifice some degree of internal validity, but have high external validity and are viewed as more applicable to everyday clinical practice. [2]
